50,000 names! (Well, nearly…)

The Names team have just finished processing data from the Open University’s Open Research Online repository. When the researchers’ names from Open Research Online were added to the existing Names data, there were 50,002 individuals identified in the Names system.

The matching algorithm developed by the Names project’s Dan Needham does a good job of comparing new names to those already in the system, matching up individuals based on their names, affiliations and the titles of their papers. The algorithm errs on the side of caution, however, to avoid wrongly matching people. This means that some individuals who are already in the system might not be matched up correctly.
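To make the idea of a cautious matcher concrete, here is a minimal sketch in Python. It is not the Names project's actual algorithm, whose rules aren't spelled out in this post: the `Record` class, the three outcomes and the rule that only a shared paper title justifies an automatic match are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Record:
    family_name: str
    given_names: str                      # may be initials only, e.g. "J. A."
    affiliations: set = field(default_factory=set)
    titles: set = field(default_factory=set)


def given_names_compatible(a: str, b: str) -> bool:
    """Compare given names token by token; an initial matches any name starting with it."""
    ta = a.replace(".", " ").lower().split()
    tb = b.replace(".", " ").lower().split()
    if not ta or not tb:
        return False
    for x, y in zip(ta, tb):
        if len(x) == 1 or len(y) == 1:
            if x[0] != y[0]:
                return False
        elif x != y:
            return False
    return True


def classify(new: Record, existing: Record) -> str:
    """Return 'match', 'review' or 'no match' for a pair of records."""
    if new.family_name.lower() != existing.family_name.lower():
        return "no match"
    if not given_names_compatible(new.given_names, existing.given_names):
        return "no match"
    shared_titles = {t.lower() for t in new.titles} & {t.lower() for t in existing.titles}
    shared_affils = {x.lower() for x in new.affiliations} & {x.lower() for x in existing.affiliations}
    # Assumed cautious rule: only a shared paper title merges automatically.
    if shared_titles:
        return "match"
    # A shared affiliation alone is suggestive, so it goes to a person to check.
    if shared_affils:
        return "review"
    return "no match"


existing = Record("Smith", "J. A.", {"University X"}, {"A Study of Things"})
incoming = Record("Smith", "Jane", {"University X"}, {"Another Paper"})
print(classify(incoming, existing))  # review
```

Erring on the side of caution shows up in the middle outcome: a pair that might be the same person is neither merged nor discarded, but queued for a human to look at.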

As a result, with the OU data, we had some 850 names (out of 2,243) to check against potential matches. We expected most of these not to be genuine matches: a 10% sample was checked first, and around 12% of the potential matches in it turned out to be actual matches. To ensure the quality of the data, we decided to check the whole batch, and this manual process determined that 108 of the possible matches did indeed match existing individuals in the Names system. Human intervention is the best way of ensuring the quality of data in these cases: automation can achieve a fair degree of accuracy in matching individuals, but in some cases it’s essential to have a person looking at potential matches to determine whether they really are a match or not. Sometimes it is obvious, but there were several in this batch where some additional research was needed to be absolutely sure.

The matching of those individuals left us with a total of 49,894 uniquely identified individuals in the Names database. It would have been nice to have been over the 50,000 mark – but the data would have been poorer quality if we’d left it as it was…
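For anyone who wants to check the sums, the figures quoted above fit together like this. The snippet uses only numbers from this post; the variable names are ours.

```python
potential_matches = 850        # names flagged for manual checking
sample_match_rate = 0.12       # share of the 10% sample that were genuine matches
confirmed_matches = 108        # found by checking the whole batch
total_before_merging = 50_002  # individuals before the manual check

# What the sample led us to expect, against what the full check found.
estimated_matches = round(potential_matches * sample_match_rate)
actual_match_rate = confirmed_matches / potential_matches

# Each confirmed match merges two records into one individual.
total_after_merging = total_before_merging - confirmed_matches

print(estimated_matches)            # 102
print(round(actual_match_rate, 3))  # 0.127
print(total_after_merging)          # 49894
```

The sample's estimate of about 102 matches was close to the 108 found by the full check.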

P.S. Come to think of it, if we include the identifiers for the 158 research institutions in Names, then we are over the 50,000 (50,052 to be precise). Yay!

LSE researchers now identified in Names

In the past few weeks the Names team have been working with colleagues at the London School of Economics to uniquely identify individuals who have been involved in research at their institution. As with our previous work with the University of Huddersfield, this involved analysing the contents of LSE’s institutional repository, LSE Research Online.

By processing the RDF data which is automatically provided by the repository’s EPrints software, we were able to compare the information within it against the existing information in Names about LSE authors. Where individuals had already been identified from the Merit 2008 Research Assessment Exercise data, the repository information usually provided additional details to augment the Names records, including first names and other titles of papers that individuals had worked on. For individuals who were not already in Names, we created new records and assigned identifiers to them.

The Names disambiguation algorithm does a good job of automatically matching information from repository data with existing Names records, but it is configured to err on the side of caution, to avoid making false connections between individuals who have similar names but are not the same person. This creates some extra work for the quality assurance process (which is undertaken by our colleagues at the British Library), as it generates a list of potential matches which have to be checked manually. This is worth doing, however, as it ensures that the resulting data is more reliable than it would be with just an automated check. The more data is added to Names, the smoother the matching process becomes, as there is more information in the system to compare against each new source of data.

In the record below, the original Merit record has been enhanced with information about the individual’s first name and with the identifier from the LSE repository. Already this person has four separate identifiers assigned to him: the local LSE one, the national Merit one derived from the 2008 Research Assessment Exercise, the Names identifier (15711) and the international ISNI identifier. We’re also currently investigating the best way of linking this data up with the other big international initiative, ORCID.

Colleagues at LSE plan to add the Names identifiers to their local name authority file for use within the institution. I’d like to note here that working in collaboration with the LSE staff helped to improve data both in Names and at the repository. The experience has also helped us to speed up and fine-tune the quality assurance process at the Names end.

In total there are now 1,005 individuals identified in Names who are affiliated with LSE. 463 of these were new identities created from information in LSE Research Online and 413 were existing Names records which have been improved with additional information from the LSE repository.

New data from the University of the West of England

Last week new data was added to Names from the Research Repository of the University of the West of England. This repository runs on the EPrints platform and we extracted information from its RDF output as we did for the University of Huddersfield’s repository earlier this year.

With the help of the quality assurance team at the British Library, 786 Names records were either created or enhanced with information from the UWE repository. In many cases for existing records we have been able to add first names where we previously only held initials, for example.

In total there are around 821 individuals with an affiliation to UWE who now have a unique identifier within the Names system.

The next data source we’ll be investigating is the aggregated data in the Institutional Repository Search service – but we’re always keen to work with individual repositories, so if you’d like to get your contributors included in Names, please get in touch.

Importing the University of Huddersfield’s researcher information

One of the main avenues through which we hope to build up the Names core record set is through harvesting information about researchers at the repository level. There are currently two methods by which a repository can make their data accessible for use within the Names project. The first method is to submit their data to us by producing a data extract of their researcher information that conforms to our Data Format Specification. The second method requires the institution to be running EPrints 3.2.1 or above as their repository software, and was recently explored with The University of Huddersfield.

EPrints 3.2.1 and above provides semantic web support, including data export in RDF+XML. By developing specific classes to read the data output using Jena we are able to harvest data from the source to be used by our matching and disambiguation algorithms against the existing Names records. To test this out we recently collaborated with The University of Huddersfield to try and extract and disambiguate the creators from their EPrints repository.

The first, and simplest, step was to export Huddersfield’s EPrints data as RDF from their repository (http://eprints.hud.ac.uk/id/dump). Once we had done this we could easily process the resulting RDF+XML file, using our disambiguation algorithms to try to match creators identified in the document against existing individuals identified within the Names Service. Two types of creator were defined in the RDF dump: those that were internal (belonging to the institution) and those that were external (not belonging to the institution). Because the amount of disambiguating data pertaining to the external individuals was limited, we decided to process only internal creators, to increase the accuracy of the results and to reduce the noise of creating many records with sparse information.
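As a rough illustration of this harvesting step, here is a sketch in Python using only the standard library. Our own code uses Jena, so this is a stand-in rather than the real thing. The sample document is invented: the `ex:creatorType` property and its namespace are hypothetical placeholders for however the dump actually marks internal and external creators, and the FOAF name properties are an assumption about its structure.

```python
import xml.etree.ElementTree as ET

RDF = "http://www.w3.org/1999/02/22-rdf-syntax-ns#"
FOAF = "http://xmlns.com/foaf/0.1/"
EX = "http://example.org/hypothetical#"  # made-up namespace for this sketch

# Invented sample, not taken from the Huddersfield dump.
SAMPLE = f"""<rdf:RDF xmlns:rdf="{RDF}" xmlns:foaf="{FOAF}" xmlns:ex="{EX}">
  <foaf:Person rdf:about="http://example.org/person/1">
    <foaf:familyName>Smith</foaf:familyName>
    <foaf:givenName>Jane</foaf:givenName>
    <ex:creatorType>internal</ex:creatorType>
  </foaf:Person>
  <foaf:Person rdf:about="http://example.org/person/2">
    <foaf:familyName>Jones</foaf:familyName>
    <foaf:givenName>M.</foaf:givenName>
    <ex:creatorType>external</ex:creatorType>
  </foaf:Person>
</rdf:RDF>"""


def internal_creators(rdf_xml: str) -> list[dict]:
    """Return the creators marked as internal, skipping external ones."""
    root = ET.fromstring(rdf_xml)
    people = []
    for person in root.findall(f"{{{FOAF}}}Person"):
        if person.findtext(f"{{{EX}}}creatorType") != "internal":
            continue
        people.append({
            "uri": person.get(f"{{{RDF}}}about"),
            "family_name": person.findtext(f"{{{FOAF}}}familyName"),
            "given_names": person.findtext(f"{{{FOAF}}}givenName"),
        })
    return people


for creator in internal_creators(SAMPLE):
    print(creator["family_name"], creator["given_names"])  # Smith Jane
```

A plain XML parser only copes with RDF+XML laid out in this one shape. A proper RDF library, which is what Jena provides, handles every valid serialisation of the same data, and that matters for a real dump.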

Processing and testing of the Huddersfield data has been an iterative process, and we used the exercise both to contribute to our records and to help improve the accuracy of our disambiguation algorithms. After an initial run we managed to identify around 550 unique individuals, but we needed to quality assure these results in order to ascertain how accurate the matching was. To do this, two reports were produced: one containing potentially mis-matched records (records which contained information from two or more individuals), and one containing potentially non-matched records (separate records which contain information about the same individual). We discovered around 300 potential mis-matches and around 200 potential non-matches.
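The two reports can be pictured with a small Python sketch. The rules used here to flag records are our own simplifications for illustration, not the checks the Names software actually runs, and the sample records are invented.

```python
from itertools import combinations

# Invented records; "given_forms" holds every form of the given names seen so far.
records = [
    {"id": 1, "family": "Smith", "given_forms": {"J.", "Jane"}},
    {"id": 2, "family": "Smith", "given_forms": {"John", "Jane"}},
    {"id": 3, "family": "Smith", "given_forms": {"J. A."}},
    {"id": 4, "family": "Jones", "given_forms": {"Mary"}},
]


def full_first_names(given_forms):
    """First names written out in full (bare initials are ignored), lower-cased."""
    firsts = set()
    for form in given_forms:
        tokens = form.replace(".", " ").split()
        if tokens and len(tokens[0]) > 1:
            firsts.add(tokens[0].lower())
    return firsts


def first_initials(given_forms):
    """The set of first initials across all forms of the given names."""
    return {form[0].upper() for form in given_forms if form}


def potential_mismatches(records):
    """Assumed rule: a record holding two different full first names may mix two people."""
    return [r["id"] for r in records if len(full_first_names(r["given_forms"])) > 1]


def potential_nonmatches(records):
    """Assumed rule: separate records sharing a family name and a first initial."""
    pairs = []
    for a, b in combinations(records, 2):
        same_family = a["family"].lower() == b["family"].lower()
        if same_family and first_initials(a["given_forms"]) & first_initials(b["given_forms"]):
            pairs.append((a["id"], b["id"]))
    return pairs


print(potential_mismatches(records))  # [2]
print(potential_nonmatches(records))  # [(1, 2), (1, 3), (2, 3)]
```

Both reports deliberately over-report: they list candidates for a person to check, not confirmed errors.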

A team at the British Library with specialist skills was made available to quality assure the results, analysing each of the potential mis-matches to see whether an actual mis-match had occurred, and analysing a sample of the potential non-matches to see whether a match should have occurred and why. The results for the mis-matches were encouraging, with 0 mis-matches found. The results for the non-matches, however, indicated that around 80% of the potential non-matches were actual non-matches.

Using this information we were able to fix a software bug, and also make further tweaks to the disambiguation algorithms to reduce the level of non-matches. After a further round of quality assurance by the British Library we discovered that we had reduced the number of non-matches to around 50% and the remaining cases were deemed impossible to match by automated means. These final records were merged manually.

Once all identified individuals had either been matched with an existing Names identifier or assigned a new one, we were able to return a list of assigned Names identifiers to Huddersfield.

Some of the identifiers and records created or added to as part of this exercise are listed here:

1. http://names.mimas.ac.uk/individual/46934 (a record created purely from Huddersfield data)

2. http://names.mimas.ac.uk/individual/6831 (a record which already existed, but had Huddersfield data merged into it).

Data submission specification

We’re getting a good response from repositories and institutions who would like to provide information about their researchers to improve the data in the Names system (see previous post for details). As a consequence, a data submission specification has been drawn up by Dan Needham. This lists the mandatory and optional fields that Names needs in order to create or match records for institutional staff. It also explains the best way to format your records.

The table below shows the information we’d like to receive from institutions:

| Field | Description | Requirement |
| --- | --- | --- |
| Primary author family names | | Mandatory |
| Primary author given names | | Mandatory |
| Primary author title | Name prefix / salutation e.g. Mrs, Dr, Sir … | Optional |
| Primary author date of birth | YYYY-MM-DD | Optional |
| Primary author date of death | YYYY-MM-DD | Optional |
| Primary author fields of interest | Semi-colon delimited list of strings describing fields of interest associated with the individual. Preferably values taken from a controlled list of terms, although this is not required. | Optional |
| Primary author home page | URL of web page that contains information that helps identify the individual e.g. personal homepage, institutional page, LinkedIn page | Optional |
| Primary author internal identifier | Your internally used identifier. | Optional |
| Primary author external identifiers | A list of identifiers from other providers assigned to an individual. The list should be semi-colon delimited and contain alternating values for the identifier provider and the identifier itself, i.e. <source>;<identifier>;<source>;<identifier> | Optional |
| Result publication title | Please generate a complete new row for each publication given for each author. | Mandatory |
| Journal title | | Optional |
| Volume | | Optional |
| Issue | | Optional |
| Start page | | Optional |
| End page | | Optional |
| Year of publication | YYYY | Optional |
| Subject area | Classified subject area, may be different from author’s field of activity. | Optional |
| ISSN | | Optional |
| DOI | | Optional |
| Co-authors | Semi-colon delimited list of co-author names. May include the primary author name if necessary. Preferably in the format <family name(s)>, <given name(s)>. If necessary, another format is acceptable as long as it is used consistently. | Mandatory |

There’s also an example record in the required format to illustrate this.
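To show how the delimited fields in the table might be read, here is a short Python sketch. The dictionary keys, helper names and sample values are hypothetical and not part of the specification; only the semi-colon conventions and the list of mandatory fields come from the table.

```python
# Hypothetical keys standing in for the four mandatory columns in the table.
MANDATORY = ("family_names", "given_names", "publication_title", "co_authors")


def parse_external_identifiers(value: str) -> list[tuple[str, str]]:
    """Split '<source>;<identifier>;<source>;<identifier>' into (source, identifier) pairs."""
    if not value.strip():
        return []
    items = [item.strip() for item in value.split(";")]
    if len(items) % 2 != 0 or not all(items):
        raise ValueError("expected alternating <source>;<identifier> values")
    return list(zip(items[0::2], items[1::2]))


def parse_co_authors(value: str) -> list[tuple[str, str]]:
    """Split a semi-colon delimited list of '<family name(s)>, <given name(s)>' entries."""
    authors = []
    for name in value.split(";"):
        if not name.strip():
            continue
        family, _, given = name.partition(",")
        authors.append((family.strip(), given.strip()))
    return authors


def missing_mandatory(row: dict) -> list[str]:
    """List the mandatory fields that are absent or empty in a row."""
    return [key for key in MANDATORY if not row.get(key, "").strip()]


# Invented example row; one row is expected per publication per author.
row = {
    "family_names": "Smith",
    "given_names": "Jane Anne",
    "publication_title": "A Study of Things",
    "co_authors": "Smith, Jane Anne; Jones, M.",
    "external_identifiers": "sourceA;12345;sourceB;xyz-9",
}

print(missing_mandatory(row))                                    # []
print(parse_external_identifiers(row["external_identifiers"]))  # [('sourceA', '12345'), ('sourceB', 'xyz-9')]
print(parse_co_authors(row["co_authors"]))                       # [('Smith', 'Jane Anne'), ('Jones', 'M.')]
```

Rejecting an odd number of identifier values, rather than guessing, means a misaligned source and identifier pair is caught before it reaches the matching stage.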

Improving Names data with help from institutions

The MERIT data has given us a good corpus of UK researchers’ names to use as the basis of the Names prototype. There are around 45,000 and most have institutional affiliations associated with them, too, which makes them a rich data set. What they don’t have, generally, are full names: they’re usually just surnames and initials.

This is where we need help from UK institutions to improve the data and we’ve recently been testing this process with some information supplied by Robert Gordon University (RGU) in Aberdeen. Researchers at the university were contacted and the aim of the process explained. Having cleared things with their researchers, staff at RGU then extracted information from the OpenAIR institutional repository for staff that were willing to be involved and sent them to the Names team in table form, listing surname, forename(s), publication title, date and publication type.

This data was then matched with existing Names data. 17 names were found to match with individuals already in the database, based on names and article titles. The other names were not in the database: new Names records and persistent identifiers were created for these individuals. Quality assurance on the results of the matching process was carried out by colleagues at the British Library.

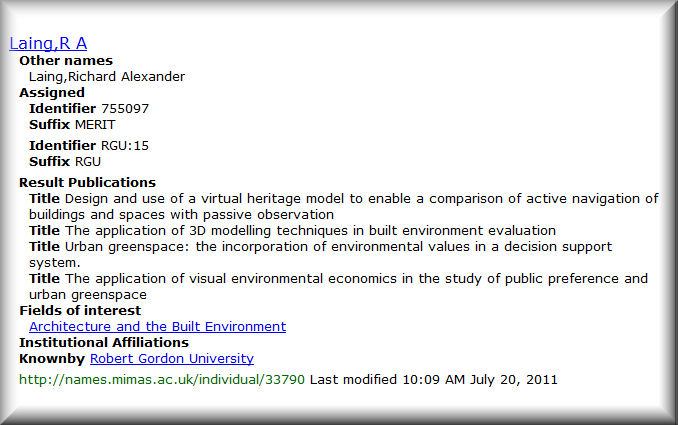

In this example, the record for R. A. Laing has been enhanced with the researcher’s full forenames and with additional publications (only the first listed publication was supplied by the MERIT database). This additional information will assist in the establishment of future matches with further sources of names data that become available to the Names team.

If your institution is interested in providing similar data to improve the Names records for your researchers, then we’d love to hear from you. You can contact Dan Needham, the project’s lead developer at daniel.needham@manchester.ac.uk, or if you have any questions, please email project manager Amanda Hill. We can supply you with a sample email which will introduce the project to researchers in your institution if, like RGU, you want to tell them about it.

EPrints plug-in project

The Names Project has recently started a new project-within-a-project to build some Names functionality into the EPrints repository software. The survey of UK repository managers undertaken by the project in 2010* showed that the largest share of respondents (41%) were using EPrints to run their institutional repositories. This finding is supported by the OpenDOAR directory of repositories, which shows EPrints in use by 45% of the 193 repositories it lists for the country.

Incorporating Names into EPrints could be useful, therefore, for a large number of repositories in the UK. Our initial plans include developing the following features:

- Adapting the existing auto-completion field within EPrints to make a call to the Names API to bring back potential matches from the Names data (and distinguishing them from internal matches in some way)

- Associating the Names identifier with creator metadata within EPrints

- Allowing the update of existing materials within the repository to associate creator names with their Names identifier and to allow for searching by that identifier, so that all records for an individual can be retrieved, regardless of the form of the name

- Modifying output records from EPrints to include the Names identifier

- Packaging the resulting plug-in so that it can be made available through the EPrints Bazaar.
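As a thinking aid for the first two features, here is a small Python sketch of how auto-completion suggestions from the two sources might be combined and told apart. The plug-in itself would not be written in Python, no call to the Names API is made here, and the record layout and the `names_id` and `source` keys are hypothetical.

```python
def merge_suggestions(local, from_names):
    """Label each suggestion with its source and drop Names results already known locally."""
    merged = [{**s, "source": "local"} for s in local]
    # Local creators that already carry a Names identifier should not appear twice.
    known_ids = {s["names_id"] for s in local if s.get("names_id")}
    for s in from_names:
        if s["names_id"] not in known_ids:
            merged.append({**s, "source": "names"})
    return merged


# Invented data standing in for repository records and Names results.
local = [
    {"name": "Smith, Jane", "names_id": "1001"},
    {"name": "Smith, John", "names_id": None},
]
from_names = [
    {"name": "Smith, Jane Anne", "names_id": "1001"},
    {"name": "Smith, Joan", "names_id": "1002"},
]

for suggestion in merge_suggestions(local, from_names):
    print(suggestion["source"], suggestion["name"])
# local Smith, Jane
# local Smith, John
# names Smith, Joan
```

Removing duplicates by identifier rather than by name is deliberate: two people can share a name, which is the problem Names exists to solve.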

Any comments, suggestions, pitfalls you can foresee with this approach?

*Report on the survey [PDF]

Names of Merit

How vain, without the merit, is the name!¹

At the end of October 2010, the Merit project made its cleaned-up version of the 2008 Research Assessment Exercise (RAE) data available through the project’s website. This data set includes names of the top researchers in the UK (Stephen Hawking, for example, Monica Grady or Brian Cox), with the titles of the materials that were submitted for assessment by their institutions. It seemed to be an ideal set of information for the Names Project to use, as the information includes institutional affiliations, which is not easy to track down from other data sources we’ve been investigating, such as the Zetoc table of contents data from the British Library.

Names records have now been generated for all of the individuals represented in the Merit data. This creates a core of nearly 47,000 disambiguated names of UK researchers for the project, associated with 158 institutions. As a result of the earlier work of the Merit project, the quality of the data was good. There were occasions where individuals had more than one identifier in the Merit data (when their work had been submitted by more than one institution), but these were successfully identified and merged in the disambiguation process.

Our British Library colleagues’ quality assurance process identified only one case where the system wrongly suggested a match. There were two D. J. Siveters listed in the data, one at the University of Leicester, the other at the University of Oxford, both writing on the subject of palaeontology. A little investigation revealed that these were in fact two distinct individuals: twin brothers (Derek and David) working in the same field who often co-author papers. Perhaps these two form the ultimate test of any disambiguation mechanism?

The project team are intending to share the records generated by this process with colleagues working on the ISNI (International Standard Name Identifier) to see if they can identify matches with records in the ISNI data.

¹ Homer’s Iliad, Book XVII, translation by Alexander Pope, 1715
